The number of cycles an operation gets depends on its complexity. On this scale, an AND and a NAND operation would likely take the same number of cycles.

However, adding a NAND instruction could still be worth it, e.g. if this would save power or lead to more compact programs. But those are fairly big assumptions that have to be balanced against the complexity of the instruction set. Every instruction complicates the decoding pipeline. Adding very small instructions is generally not worth it.

Of course, the decoding pipeline might recognize a pattern like `and a, b` followed by `not a` and issue a NAND microcode instruction in its place. But again: this likely wouldn't save any cycles, would make the CPU more complicated, and in any case would be unobservable from the outside. I don't know if modern CPUs have such microcode, but I'd bet they have better uses for that die space than a somewhat rare operation that can be easily emulated through other logical operations.

There is absolutely no reason to minimize the number of gates involved in executing an instruction. The number of gates used to read the instruction from memory, figure out what it does, and route the input and output values far outweighs the number of gates involved in actually executing an instruction like AND.
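To make the "easily emulated" remark concrete, here is a minimal sketch of a bitwise NAND built from AND and NOT. The 32-bit width and the names `MASK32` and `nand32` are assumptions made for illustration; in a language with fixed-width integers, such as C, the equivalent expression would simply be `~(a & b)`.

```python
MASK32 = 0xFFFFFFFF  # assumption: model a 32-bit register


def nand32(a: int, b: int) -> int:
    """Bitwise NAND of two 32-bit values, built from AND followed by NOT."""
    # Python integers are unbounded, so mask the result back to 32 bits.
    return ~(a & b) & MASK32


print(hex(nand32(0b1100, 0b1010)))  # prints 0xfffffff7
```

Two existing operators cover the case, which is part of why a dedicated instruction or language operator adds little.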

# Nand x alternative code

Actually, the machine code instructions are usually decoded into microcode, a CPU-internal instruction set. This decoding is controlled largely by firmware, i.e. the CPU performs a hardware-assisted just-in-time re-compilation. And then finally the microcode is dispatched on specialized hardware circuits. The point is that every instruction is touched by millions of transistors before it is actually executed.

Per the instruction set specification, the CPU has a certain number of cycles to execute a machine code operation.
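The decoding step can be pictured with a toy model. The sketch below is purely illustrative: the tuple instruction format, the `u_` micro-op names, and the `decode` function are all hypothetical and do not describe any real CPU. It translates each toy instruction into a micro-op and fuses `and a, b` followed by `not a` into one `u_nand` micro-op.

```python
from typing import List, Tuple

Instr = Tuple[str, ...]  # hypothetical format: (opcode, operand, ...)


def decode(program: List[Instr]) -> List[Instr]:
    """Translate toy machine instructions into toy micro-ops.

    Fuses `and a, b` followed by `not a` into a single `u_nand a, b`.
    """
    micro_ops: List[Instr] = []
    i = 0
    while i < len(program):
        instr = program[i]
        nxt = program[i + 1] if i + 1 < len(program) else None
        if (
            instr[0] == "and"
            and nxt is not None
            and nxt[0] == "not"
            and nxt[1] == instr[1]  # the NOT must target the AND's destination
        ):
            micro_ops.append(("u_nand", instr[1], instr[2]))
            i += 2  # both instructions were consumed by the fused micro-op
        else:
            micro_ops.append(("u_" + instr[0],) + instr[1:])
            i += 1
    return micro_ops


program = [("and", "r1", "r2"), ("not", "r1"), ("add", "r3", "r1")]
print(decode(program))
# prints [('u_nand', 'r1', 'r2'), ('u_add', 'r3', 'r1')]
```

Even in this toy form, the fusion rule costs more logic than the NAND it produces, and the program being decoded cannot tell whether the fusion happened.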

When the CPU executes machine code, the machine code is handled in a pipeline. Instructions in this pipeline are decoded and partially executed ahead of time.

There are so many levels in between the source code of some program and the circuits on a chip. While some microarchitecture details definitely have a high-level performance impact, the ability to specify a NAND operation wouldn't be one of them.

High-level programs have little connection to circuits on a chip. First, our program must be converted to machine code. This might happen through an ahead-of-time compiler, or a JIT compiler immediately before execution, or the program might be interpreted without compilation. All of these approaches already introduce substantial overhead that goes beyond what would be saved by a simpler circuit.
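One of these intermediate levels can be inspected directly. As a small sketch, assuming the CPython interpreter, the standard `dis` module lists the bytecode instructions a one-line function compiles to. The exact instruction names differ between Python versions, so no output is shown here.

```python
import dis


def nand(a, b):
    return ~(a & b)


# Each name below is one interpreter-level instruction, and each of those
# is in turn carried out by many machine instructions inside the interpreter.
ops = [instr.opname for instr in dis.get_instructions(nand)]
print(ops)
```

A single expression already expands into several interpreter steps long before any gate is involved.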

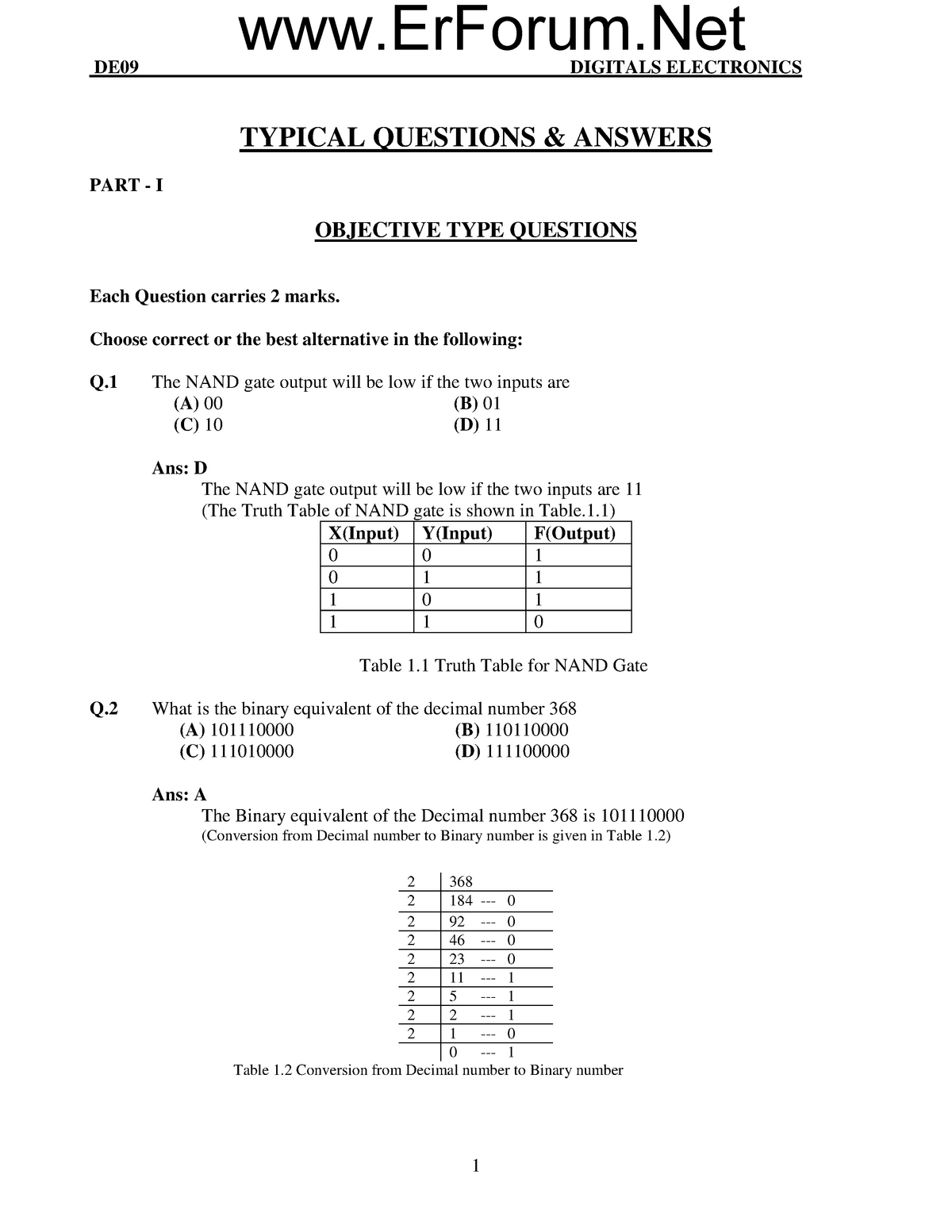

As far as I know, modern processors are made up of NAND gates, since any logic gate can be implemented as a combination of NAND gates. For example, processors compose NAND gates like this to create these operators: A AND B = (A NAND B) NAND (A NAND B).

I know that some golfing languages like APL have a dedicated NAND operator, but I'm thinking about languages like C, C++, Java, Rust, Go, Swift, Kotlin, and even instruction sets, since these are the languages which actually implement golfing languages.
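The composition quoted in the question can be checked exhaustively. This is a minimal sketch with booleans standing in for single wires; the function names `nand`, `not_`, `and_`, and `or_` are chosen here for illustration.

```python
def nand(a: bool, b: bool) -> bool:
    """The single primitive gate: false only when both inputs are true."""
    return not (a and b)


def not_(a: bool) -> bool:
    # NOT A = A NAND A
    return nand(a, a)


def and_(a: bool, b: bool) -> bool:
    # A AND B = (A NAND B) NAND (A NAND B)
    return nand(nand(a, b), nand(a, b))


def or_(a: bool, b: bool) -> bool:
    # A OR B = (A NAND A) NAND (B NAND B)
    return nand(nand(a, a), nand(b, b))


# Check every input combination against Python's built-in operators.
for a in (False, True):
    assert not_(a) == (not a)
    for b in (False, True):
        assert and_(a, b) == (a and b)
        assert or_(a, b) == (a or b)
```

The loop covers all four input pairs, so the identities hold for every case rather than for a few samples.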